Syntax

A dgsh script follows the syntax of a bash(1) shell script with the addition of multipipe blocks. A multipipe block contains one or more dgsh simple commands, other multipipe blocks, or pipelines of the previous two types of commands. The commands in a multipipe block are executed asynchronously (in parallel, in the background). Data may be redirected or piped into and out of a multipipe block. With multipipe blocks dgsh scripts form directed acyclic process graphs. It follows from the above description that multipipe blocks can be recursively composed.

As a simple example consider running the following command directly within dgsh

{{ echo hello & echo world & }} | paste

or by invoking dgsh with the command as an argument.

dgsh -c '{{ echo hello & echo world & }} | paste'

The command will run paste with input from the two echo processes to output hello world.

This is equivalent to running the following bash command, but with the flow of data appearing in the natural left-to-right order.

paste <(echo hello) <(echo world)

In the following larger example, which implements a directory listing similar to that of the Windows DIR command, the output of ls is distributed to the commands of a multipipe block, which contains six commands: awk, which reorders the fields in a DIR-like way; wc, which counts the number of files and passes the result to the tr command that deletes newline characters; awk, which tallies the number of bytes; grep, which counts the number of directories and passes the result to the tr command that deletes newline characters; and two echo commands, which provide the labels for the data output by the commands described above. All six commands pass their output to the cat command, which gathers their outputs in order.

FREE=$(df -h . | awk '!/Use%/{print $4}')

ls -n |

tee |

{{

# Reorder fields in DIR-like way

awk '!/^total/ {print $6, $7, $8, $1, sprintf("%8d", $5), $9}' &

# Count number of files

wc -l | tr -d \\n &

# Print label for number of files

echo -n ' File(s) ' &

# Tally number of bytes

awk '{s += $5} END {printf("%d bytes\n", s)}' &

# Count number of directories

grep -c '^d' | tr -d \\n &

# Print label for number of dirs and calculate free bytes

echo " Dir(s) $FREE bytes free" &

}} |

cat

Formally, when the (modified) bash is provided with the --dgsh argument, dgsh extends the syntax of the Unix Bourne shell as follows.

<dgsh_block> ::= '{{' <dgsh_list> '}}'

<dgsh_list> ::= <dgsh_list_item> '&'
                <dgsh_list_item> <dgsh_list>

<dgsh_list_item> ::= <simple_command>
                     <dgsh_block>
                     <dgsh_list_item> '|' <dgsh_list_item>

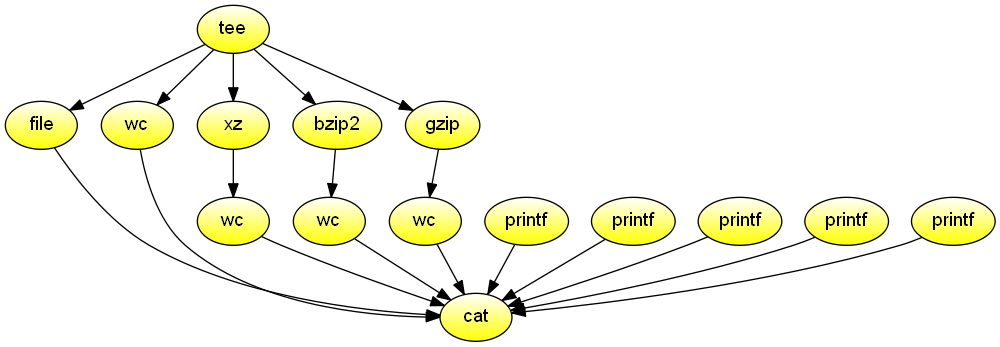

Compression benchmark

Report file type, length, and compression performance for data received from the standard input. The data never touches the disk. Demonstrates the use of an output multipipe to source many commands from one, followed by an input multipipe to sink the output of many commands to one. Also demonstrates dgsh-tee, which both propagates the same input to many commands and collects the output of many commands in order, in a way that is transparent to users.
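For comparison, the following plain bash sketch approximates the same report without dgsh. It is an illustration under stated assumptions rather than part of dgsh: the function name compression_report is hypothetical, the input is buffered in a temporary file, the measurements run one after the other, and tools that are not installed are skipped.

```shell
#!/usr/bin/env bash
# Hypothetical plain-bash approximation of the compression benchmark.
# Assumption: buffering standard input in a temporary file is acceptable.
compression_report()
{
    local tmp tool
    tmp=$(mktemp) || return 1
    cat >"$tmp"

    # file(1) may be absent on minimal systems
    if command -v file >/dev/null 2>&1
    then
        echo -n 'File type: '
        file - <"$tmp"
    fi

    echo -n 'Original size: '
    wc -c <"$tmp" | tr -d ' '

    for tool in xz bzip2 gzip
    do
        # Skip compressors that are not installed
        command -v "$tool" >/dev/null 2>&1 || continue
        echo -n "$tool: "
        "$tool" -c <"$tmp" | wc -c | tr -d ' '
    done

    rm -f "$tmp"
}

# Example: report on a short in-line sample
printf 'hello hello hello\n' | compression_report
```

The dgsh script, by contrast, needs no temporary file and runs all measurements concurrently: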

#!/usr/bin/env dgsh

tee |

{{

echo -n 'File type:' &

file - &

echo -n 'Original size:' &

wc -c &

echo -n 'xz:' &

xz -c | wc -c &

echo -n 'bzip2:' &

bzip2 -c | wc -c &

echo -n 'gzip:' &

gzip -c | wc -c &

}} |

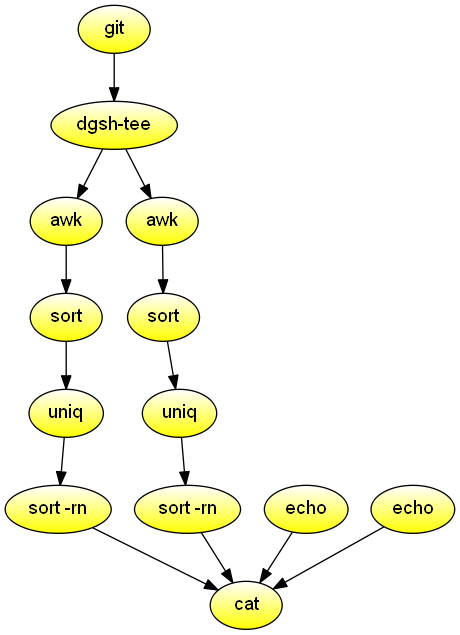

catGit commit statistics

Process the git history, and list the authors and days of the week ordered by the number of their commits. Demonstrates streams and piping through a function.
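The frequency-ordering idiom at the heart of this script (sort, uniq -c, sort -rn) can be tried on its own in plain bash. The sketch below repeats the script's forder function and feeds it made-up names, an assumption for illustration, in place of git log output.

```shell
#!/usr/bin/env bash
# Order the input lines by decreasing number of occurrences
forder()
{
    sort |
    uniq -c |
    sort -rn
}

# Hypothetical sample data standing in for the author field of git log
printf '%s\n' alice bob alice carol alice bob | forder
```

The most frequent name (alice, with three occurrences) is listed first. The dgsh script applies the same function to two streams at once: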

#!/usr/bin/env dgsh

forder()

{

sort |

uniq -c |

sort -rn

}

export -f forder

git log --format="%an:%ad" --date=default "$@" |

tee |

{{

echo "Authors ordered by number of commits" &

# Order by frequency

awk -F: '{print $1}' |

call 'forder' &

echo "Days ordered by number of commits" &

# Order by frequency

awk -F: '{print substr($2, 1, 3)}' |

call 'forder' &

}} |

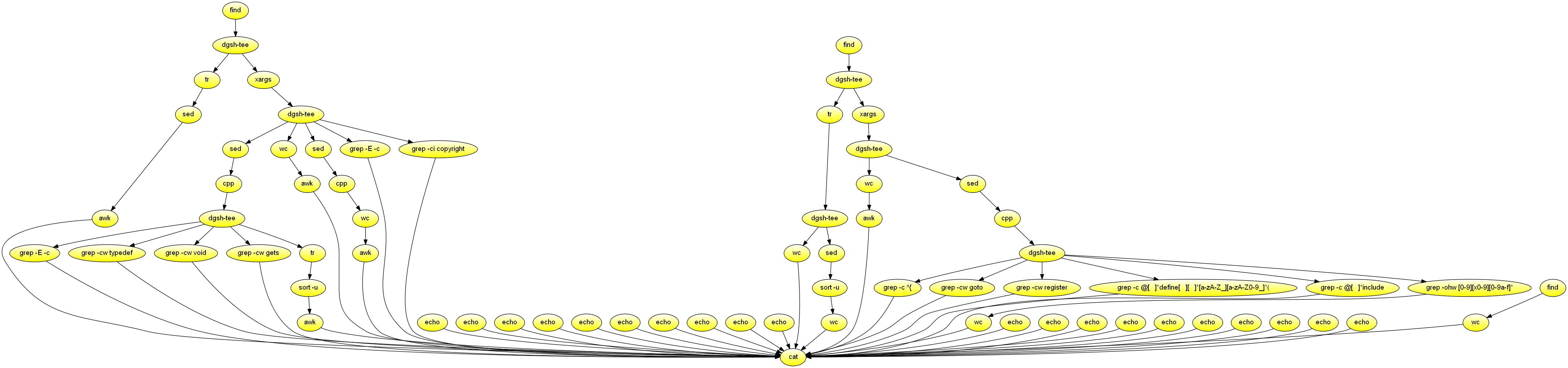

catC code metrics

Process a directory containing C source code, and produce a summary of various metrics. Demonstrates nesting and commands without input.

#!/usr/bin/env dgsh

{{

# C and header code

find "$@" \( -name \*.c -or -name \*.h \) -type f -print0 |

tee |

{{

# Average file name length

# Convert to newline separation for counting

echo -n 'FNAMELEN: ' &

tr \\0 \\n |

# Remove path

sed 's|^.*/||' |

# Maintain average

awk '{s += length($1); n++} END {

if (n>0)

print s / n;

else

print 0; }' &

xargs -0 /bin/cat |

tee |

{{

# Remove strings and comments

sed 's/#/@/g;s/\\[\\"'\'']/@/g;s/"[^"]*"/""/g;'"s/'[^']*'/''/g" |

cpp -P 2>/dev/null |

tee |

{{

# Structure definitions

echo -n 'NSTRUCT: ' &

egrep -c 'struct[ ]*{|struct[ ]*[a-zA-Z_][a-zA-Z0-9_]*[ ]*{' &

#}} (match preceding openings)

# Type definitions

echo -n 'NTYPEDEF: ' &

grep -cw typedef &

# Use of void

echo -n 'NVOID: ' &

grep -cw void &

# Use of gets

echo -n 'NGETS: ' &

grep -cw gets &

# Average identifier length

echo -n 'IDLEN: ' &

tr -cs 'A-Za-z0-9_' '\n' |

sort -u |

awk '/^[A-Za-z]/ { len += length($1); n++ } END {

if (n>0)

print len / n;

else

print 0; }' &

}} &

# Lines and characters

echo -n 'CHLINESCHAR: ' &

wc -lc |

awk '{OFS=":"; print $1, $2}' &

# Non-comment characters (rounded thousands)

# -traditional avoids expansion of tabs

# We round it to avoid failing due to minor

# differences between preprocessors in regression

# testing

echo -n 'NCCHAR: ' &

sed 's/#/@/g' |

cpp -traditional -P 2>/dev/null |

wc -c |

awk '{OFMT = "%.0f"; print $1/1000}' &

# Number of comments

echo -n 'NCOMMENT: ' &

egrep -c '/\*|//' &

# Occurrences of the word Copyright

echo -n 'NCOPYRIGHT: ' &

grep -ci copyright &

}} &

}} &

# C files

find "$@" -name \*.c -type f -print0 |

tee |

{{

# Convert to newline separation for counting

tr \\0 \\n |

tee |

{{

# Number of C files

echo -n 'NCFILE: ' &

wc -l &

# Number of directories containing C files

echo -n 'NCDIR: ' &

sed 's,/[^/]*$,,;s,^.*/,,' |

sort -u |

wc -l &

}} &

# C code

xargs -0 /bin/cat |

tee |

{{

# Lines and characters

echo -n 'CLINESCHAR: ' &

wc -lc |

awk '{OFS=":"; print $1, $2}' &

# C code without comments and strings

sed 's/#/@/g;s/\\[\\"'\'']/@/g;s/"[^"]*"/""/g;'"s/'[^']*'/''/g" |

cpp -P 2>/dev/null |

tee |

{{

# Number of functions

echo -n 'NFUNCTION: ' &

grep -c '^{' &

# Number of gotos

echo -n 'NGOTO: ' &

grep -cw goto &

# Occurrences of the register keyword

echo -n 'NREGISTER: ' &

grep -cw register &

# Number of macro definitions

echo -n 'NMACRO: ' &

grep -c '@[ ]*define[ ][ ]*[a-zA-Z_][a-zA-Z0-9_]*(' &

# Number of include directives

echo -n 'NINCLUDE: ' &

grep -c '@[ ]*include' &

# Number of constants

echo -n 'NCONST: ' &

grep -ohw '[0-9][x0-9][0-9a-f]*' | wc -l &

}} &

}} &

}} &

# Header files

echo -n 'NHFILE: ' &

find "$@" -name \*.h -type f |

wc -l &

}} |

# Gather and print the results

cat
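Each metric in the script above is an ordinary Unix pipeline, so it can be exercised on its own in plain bash. As a sketch, the function below (the name avg_identifier_length is hypothetical) repeats the IDLEN pipeline and applies it to a one-line C fragment made up for illustration.

```shell
#!/usr/bin/env bash
export LC_ALL=C

# Average length of the unique identifier-like words in the standard input
avg_identifier_length()
{
    # Split the input into words made of letters, digits, and underscores
    tr -cs 'A-Za-z0-9_' '\n' |
    sort -u |
    # Consider only words that start with a letter
    awk '/^[A-Za-z]/ { len += length($1); n++ } END {
        if (n > 0)
            print len / n;
        else
            print 0; }'
}

# Unique words: foo1, int, main, return, void (21 characters in total)
echo 'int main(void) { return foo1; }' | avg_identifier_length
```

Note that the pipeline also counts keywords such as int and return, since it does not distinguish them from identifiers.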

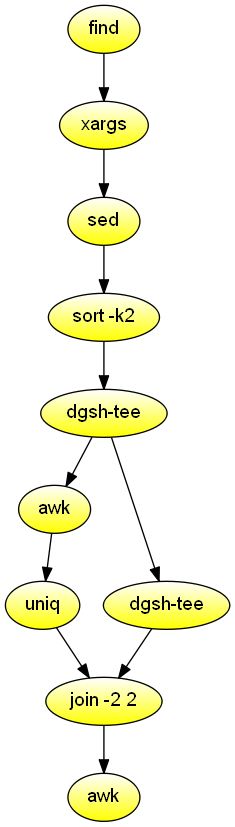

Find duplicate files

List the names of duplicate files in the specified directory. Demonstrates the combination of streams with a relational join.

#!/usr/bin/env dgsh

# Create list of files

find "$@" -type f |

# Produce lines of the form

# MD5(filename)= 811bfd4b5974f39e986ddc037e1899e7

xargs openssl md5 |

# Convert each line into a "filename md5sum" pair

sed 's/^MD5(//;s/)= / /' |

# Sort by MD5 sum

sort -k2 |

tee |

{{

# Print an MD5 sum for each file that appears more than once

awk '{print $2}' | uniq -d &

# Promote the stream to gather it

cat &

}} |

# Join the repeated MD5 sums with the corresponding file names

# Join expects two inputs, second will come from scatter

# XXX make streaming input identifiers transparent to users

join -2 2 |

# Output same files on a single line

awk '

BEGIN {ORS=""}

$1 != prev && prev {print "\n"}

END {if (prev) print "\n"}

{if (prev) print " "; prev = $1; print $2}'

Highlight misspelled words

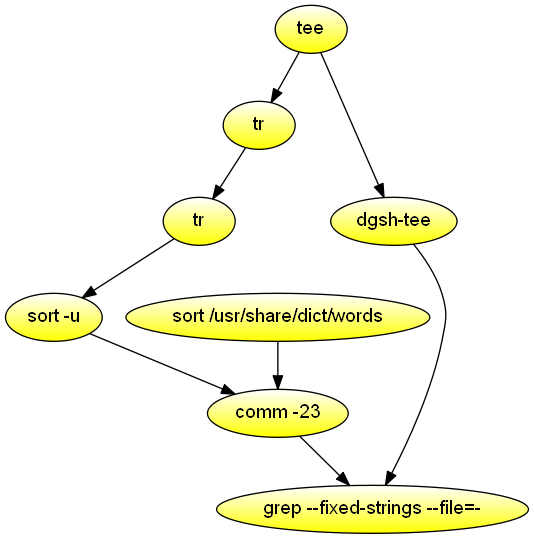

Highlight the words that are misspelled in the command's first argument. Demonstrates stream processing with multipipes and the avoidance of pass-through constructs to avoid deadlocks.

#!/usr/bin/env dgsh

export LC_ALL=C

tee |

{{

{{

# Find errors

tr -cs A-Za-z \\n |

tr A-Z a-z |

sort -u &

# Ensure dictionary is sorted consistently with our settings

sort /usr/share/dict/words &

}} |

comm -23 &

cat &

}} |

grep -F -f - -i --color -w -C 2

Word properties

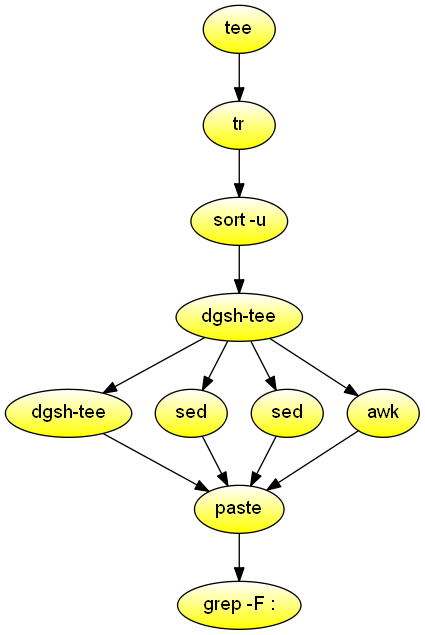

Read text from the standard input and list words containing a two-letter palindrome, words containing four consonants, and words longer than 12 characters.
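The paste step of the script relies on every branch emitting exactly one line per input word. This is why its sed commands end with t followed by g: when the substitution succeeds, t skips the rest of the sed script and the result is printed; otherwise g replaces the word with the empty hold space, producing a blank line. A minimal plain-bash sketch of the palindrome branch follows; the function name and sample words are made up, and two -e expressions stand in for the embedded newline.

```shell
#!/usr/bin/env bash
# Print "p: xy-yx" for words containing a two-letter palindrome (xyyx),
# and an empty line for every other word
two_letter_palindromes()
{
    sed -e 's/.*\(.\)\(.\)\2\1.*/p: \1\2-\2\1/;t' -e 'g'
}

# noon contains the pattern, cat does not
printf '%s\n' noon cat | two_letter_palindromes
```

Because each branch keeps its output aligned with the word list in this way, paste can place the four streams side by side: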

#!/usr/bin/env dgsh

# Consistent sorting across machines

export LC_ALL=C

# Stream input from file

cat $1 |

# Split input one word per line

tr -cs a-zA-Z \\n |

# Create list of unique words

sort -u |

tee |

{{

# Pass through the original words

cat &

# List two-letter palindromes

sed 's/.*\(.\)\(.\)\2\1.*/p: \1\2-\2\1/;t

g' &

# List four consecutive consonants

sed -E 's/.*([^aeiouyAEIOUY]{4}).*/c: \1/;t

g' &

# List length of words longer than 12 characters

awk '{if (length($1) > 12) print "l:", length($1);

else print ""}' &

}} |

# Paste the four streams side-by-side

paste |

# List only words satisfying one or more properties

fgrep :

Web log reporting

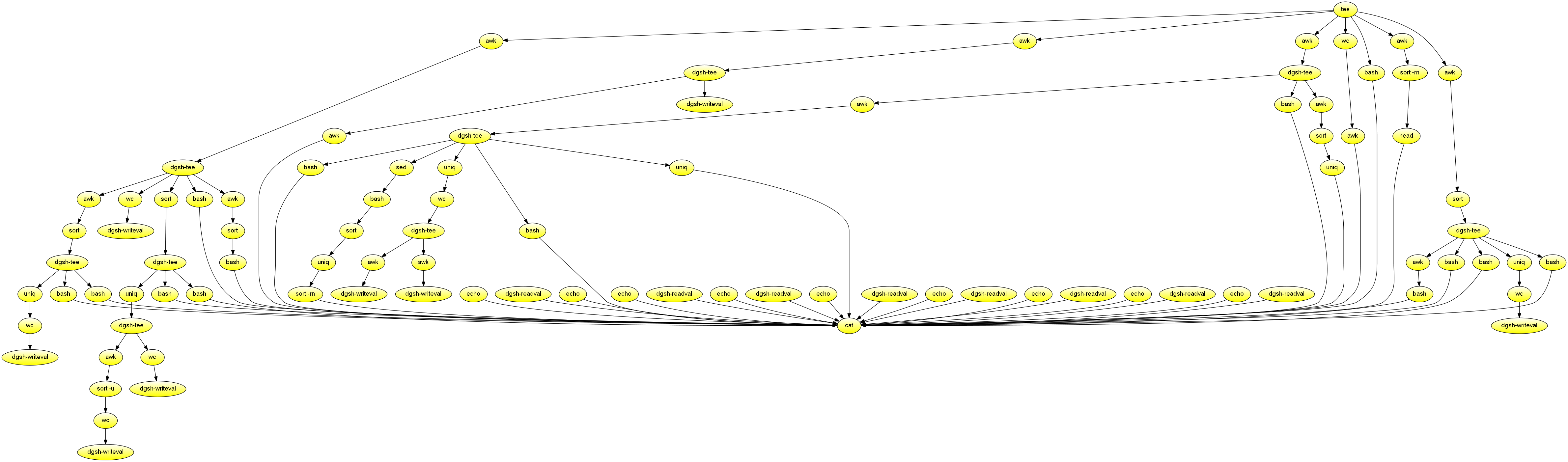

Creates a report for a fixed-size web log file read from the standard input. Demonstrates the combined use of multipipe blocks, writeval and readval to store and retrieve values, and functions in the scatter block. Used to measure throughput increase achieved through parallelism.

#!/usr/bin/env dgsh

# Output the top X elements of the input by the number of their occurrences

# X is the first argument

toplist()

{

uniq -c | sort -rn | head -$1

echo

}

# Output the argument as a section header

header()

{

echo

echo "$1"

echo "$1" | sed 's/./-/g'

}

# Consistent sorting

export LC_ALL=C

export -f toplist

export -f header

cat <<EOF

WWW server statistics

=====================

Summary

-------

EOF

tee |

{{

awk '{s += $NF} END {print s / 1024 / 1024 / 1024}' |

tee |

{{

# Number of transferred bytes

echo -n 'Number of Gbytes transferred: ' &

cat &

dgsh-writeval -s nXBytes &

}} &

# Number of log file bytes

echo -n 'MBytes log file size: ' &

wc -c |

awk '{print $1 / 1024 / 1024}' &

# Host names

awk '{print $1}' |

tee |

{{

wc -l |

tee |

{{

# Number of accesses

echo -n 'Number of accesses: ' &

cat &

dgsh-writeval -s nAccess &

}} &

# Sorted hosts

sort |

tee |

{{

# Unique hosts

uniq |

tee |

{{

# Number of hosts

echo -n 'Number of hosts: ' &

wc -l &

# Number of TLDs

echo -n 'Number of top level domains: ' &

awk -F. '$NF !~ /[0-9]/ {print $NF}' |

sort -u |

wc -l &

}} &

# Top 10 hosts

{{

call 'header "Top 10 Hosts"' &

call 'toplist 10' &

}} &

}} &

# Top 20 TLDs

{{

call 'header "Top 20 Level Domain Accesses"' &

awk -F. '$NF !~ /^[0-9]/ {print $NF}' |

sort |

call 'toplist 20' &

}} &

# Domains

awk -F. 'BEGIN {OFS = "."}

$NF !~ /^[0-9]/ {$1 = ""; print}' |

sort |

tee |

{{

# Number of domains

echo -n 'Number of domains: ' &

uniq |

wc -l &

# Top 10 domains

{{

call 'header "Top 10 Domains"' &

call 'toplist 10' &

}} &

}} &

}} &

# Hosts by volume

{{

call 'header "Top 10 Hosts by Transfer"' &

awk ' {bytes[$1] += $NF}

END {for (h in bytes) print bytes[h], h}' |

sort -rn |

head -10 &

}} &

# Sorted page name requests

awk '{print $7}' |

sort |

tee |

{{

# Top 20 area requests (input is already sorted)

{{

call 'header "Top 20 Area Requests"' &

awk -F/ '{print $2}' |

call 'toplist 20' &

}} &

# Number of different pages

echo -n 'Number of different pages: ' &

uniq |

wc -l &

# Top 20 requests

{{

call 'header "Top 20 Requests"' &

call 'toplist 20' &

}} &

}} &

# Access time: dd/mmm/yyyy:hh:mm:ss

awk '{print substr($4, 2)}' |

tee |

{{

# Just dates

awk -F: '{print $1}' |

tee |

{{

uniq |

wc -l |

tee |

{{

# Number of days

echo -n 'Number of days: ' &

cat &

#|store:nDays

echo -n 'Accesses per day: ' &

awk '

BEGIN {

"dgsh-readval -l -x -q -s nAccess" | getline NACCESS;}

{print NACCESS / $1}' &

echo -n 'MBytes per day: ' &

awk '

BEGIN {

"dgsh-readval -l -x -q -s nXBytes" | getline NXBYTES;}

{print NXBYTES / $1 / 1024 / 1024}' &

}} &

{{

call 'header "Accesses by Date"' &

uniq -c &

}} &

# Accesses by day of week

{{

call 'header "Accesses by Day of Week"' &

sed 's|/|-|g' |

call '(date -f - +%a 2>/dev/null || gdate -f - +%a)' |

sort |

uniq -c |

sort -rn &

}} &

}} &

# Hour

{{

call 'header "Accesses by Local Hour"' &

awk -F: '{print $2}' |

sort |

uniq -c &

}} &

}} &

}} |

cat

Text properties

Read text from the standard input and create files containing word, character, digram, and trigram frequencies.

#!/usr/bin/env dgsh

# Consistent sorting across machines

export LC_ALL=C

# Convert input into a ranked frequency list

ranked_frequency()

{

awk '{count[$1]++} END {for (i in count) print count[i], i}' |

# We want the standard sort here

sort -rn

}

# Convert standard input to a ranked frequency list of specified n-grams

ngram()

{

local N=$1

perl -ne 'for ($i = 0; $i < length($_) - '$N'; $i++) {

print substr($_, $i, '$N'), "\n";

}' |

ranked_frequency

}

export -f ranked_frequency

export -f ngram

tee <$1 |

{{

# Split input one word per line

tr -cs a-zA-Z \\n |

tee |

{{

# Digram frequency

call 'ngram 2 >digram.txt' &

# Trigram frequency

call 'ngram 3 >trigram.txt' &

# Word frequency

call 'ranked_frequency >words.txt' &

}} &

# Store number of characters to use in awk below

wc -c |

dgsh-writeval -s nchars &

# Character frequency

sed 's/./&\

/g' |

# Print absolute

call 'ranked_frequency' |

awk 'BEGIN {

"dgsh-readval -l -x -q -s nchars" | getline NCHARS

OFMT = "%.2g%%"}

{print $1, $2, $1 / NCHARS * 100}' > character.txt &

}}

C/C++ symbols that should be static

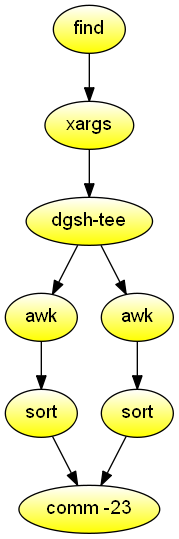

Given as an argument a directory containing object files, show which symbols are declared with global visibility, but should have been declared with file-local (static) visibility instead. Demonstrates the use of dgsh-capable comm(1) to combine data from two sources.

#!/usr/bin/env dgsh

# Find object files

find "$1" -name \*.o |

# Print defined symbols

xargs nm |

tee |

{{

# List all defined (exported) symbols

awk 'NF == 3 && $2 ~ /[A-Z]/ {print $3}' | sort &

# List all undefined (imported) symbols

awk '$1 == "U" {print $2}' | sort &

}} |

# Print exports that are not imported

comm -23
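A rough plain-bash counterpart uses process substitution to hand comm its two inputs. The sketch below is an illustration only: the function name static_candidates is hypothetical, it reads a saved nm listing from a file instead of running nm, and the sample listing is made up.

```shell
#!/usr/bin/env bash
export LC_ALL=C

# Given a file with nm(1) output, print the defined global symbols
# that no object file imports
static_candidates()
{
    local listing=$1
    comm -23 \
        <(awk 'NF == 3 && $2 ~ /[A-Z]/ {print $3}' "$listing" | sort) \
        <(awk '$1 == "U" {print $2}' "$listing" | sort)
}

# Hypothetical nm listing: helper is defined but never imported
listing=$(mktemp)
cat >"$listing" <<'EOF'
0000000000000000 T main
0000000000000010 T helper
                 U main
                 U printf
EOF
static_candidates "$listing"
rm -f "$listing"
```

Here the listing has to be read twice from a file, whereas the dgsh script scatters a single nm stream to both awk commands.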

Hierarchy map

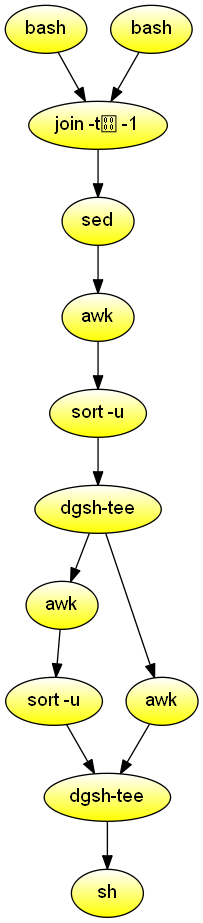

Given two directory hierarchies A and B passed as input arguments (where these represent a project at different points in its lifetime), copy the files of hierarchy A to a new directory, passed as a third argument, corresponding to the structure of directories in B. Demonstrates the use of join to process results from two inputs and the use of gather to order asynchronously produced results.

#!/usr/bin/env dgsh

if [ ! -d "$1" -o ! -d "$2" -o -z "$3" ]

then

echo "Usage: $0 dir-1 dir-2 new-dir-name" 1>&2

exit 1

fi

NEWDIR="$3"

export LC_ALL=C

line_signatures()

{

find $1 -type f -name '*.[chly]' -print |

# Split path name into directory and file

sed 's|\(.*\)/\([^/]*\)|\1 \2|' |

while read dir file

do

# Print "directory filename content" of lines with

# at least one alphabetic character

# The fields are separated by ^A (\001) and ^B (\002)

sed -n "/[a-z]/s|^|$dir"$'\001'"$file"$'\002'"|p" "$dir/$file"

done |

# Error: multi-character tab '\001\001'

sort -T `pwd` -t $'\001' -k 2

}

export -f line_signatures

{{

# Generate the signatures for the two hierarchies

call 'line_signatures "$1"' -- "$1" &

call 'line_signatures "$1"' -- "$2" &

}} |

# Join signatures on file name and content

join -t $'\001' -1 2 -2 2 |

# Print filename dir1 dir2

sed $'s/\001/\002/g' |

awk -F $'\002' 'BEGIN{OFS=" "}{print $1, $3, $4}' |

# Unique occurrences

sort -u |

tee |

{{

# Commands to copy

awk '{print "mkdir -p '$NEWDIR'/" $3 ""}' |

sort -u &

awk '{print "cp " $2 "/" $1 " '$NEWDIR'/" $3 "/" $1 ""}' &

}} |

# Order: first make directories, then copy files

# TODO: dgsh-tee does not pass along first incoming stream

cat |

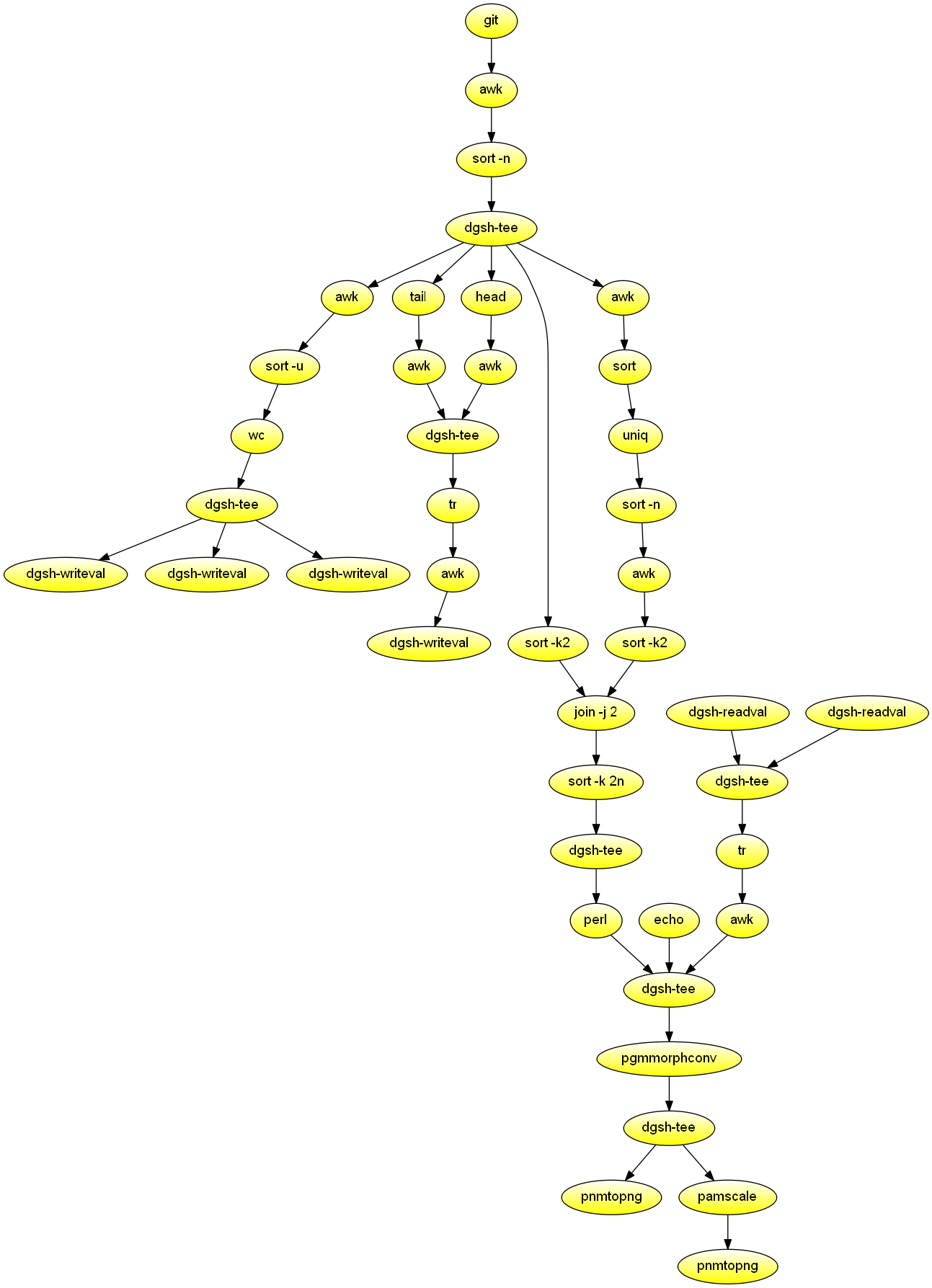

shPlot git committer activity over time

Process the git history, and create two PNG diagrams depicting committer activity over time. The most active committers appear at the center vertical of the diagram. Demonstrates image processing, mixing of synchronous and asynchronous processing in a scatter block, and the use of a dgsh-compliant join command.

#!/usr/bin/env dgsh

# Commit history in the form of ascending Unix timestamps, emails

git log --pretty=tformat:'%at %ae' |

# Filter records according to timestamp: keep (100000, now) seconds

awk 'NF == 2 && $1 > 100000 && $1 < '`date +%s` |

sort -n |

tee |

{{

{{

# Calculate number of committers

awk '{print $2}' |

sort -u |

wc -l |

tee |

{{

dgsh-writeval -s committers1 &

dgsh-writeval -s committers2 &

dgsh-writeval -s committers3 &

}} &

# Calculate last commit timestamp in seconds

tail -1 |

awk '{print $1}' &

# Calculate first commit timestamp in seconds

head -1 |

awk '{print $1}' &

}} |

# Gather last and first commit timestamp

tee |

# Make one space-delimited record

tr '\n' ' ' |

# Compute the difference in days

awk '{print int(($1 - $2) / 60 / 60 / 24)}' |

# Store number of days

dgsh-writeval -s days &

sort -k2 & # <timestamp, email>

# Place committers left/right of the median

# according to the number of their commits

awk '{print $2}' |

sort |

uniq -c |

sort -n |

awk '

BEGIN {

"dgsh-readval -l -x -q -s committers1" | getline NCOMMITTERS

l = 0; r = NCOMMITTERS;}

{print NR % 2 ? l++ : --r, $2}' |

sort -k2 & # <left/right, email>

}} |

# Join committer positions with commit time stamps

# based on committer email

join -j 2 | # <email, timestamp, left/right>

# Order by timestamp

sort -k 2n |

tee |

{{

# Create portable bitmap

echo 'P1' &

{{

dgsh-readval -l -q -s committers2 &

dgsh-readval -l -q -s days &

}} |

cat |

tr '\n' ' ' |

awk '{print $1, $2}' &

perl -na -e '

BEGIN { open(my $ncf, "-|", "dgsh-readval -l -x -q -s committers3");

$ncommitters = <$ncf>;

@empty[$ncommitters - 1] = 0; @committers = @empty; }

sub out { print join("", map($_ ? "1" : "0", @committers)), "\n"; }

$day = int($F[1] / 60 / 60 / 24);

$pday = $day if (!defined($pday));

while ($day != $pday) {

out();

@committers = @empty;

$pday++;

}

$committers[$F[2]] = 1;

END { out(); }

' &

}} |

cat |

# Enlarge points into discs through morphological convolution

pgmmorphconv -erode <(

cat <<EOF

P1

7 7

1 1 1 0 1 1 1

1 1 0 0 0 1 1

1 0 0 0 0 0 1

0 0 0 0 0 0 0

1 0 0 0 0 0 1

1 1 0 0 0 1 1

1 1 1 0 1 1 1

EOF

) |

tee |

{{

# Full-scale image

pnmtopng >large.png &

# A smaller image

pamscale -width 640 |

pnmtopng >small.png &

}}

# Close dgsh-writeval

#dgsh-readval -l -x -q -s committers
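The placement step is the least obvious part of the script above: committers arrive sorted by ascending number of commits and are assigned alternately to the leftmost and the rightmost free column, so the most active ones end up in the middle. The sketch below isolates that awk program in plain bash. Passing the number of committers with -v instead of reading it through dgsh-readval, the function name, and the sample e-mail addresses are assumptions made for illustration.

```shell
#!/usr/bin/env bash
# Read "count email" lines sorted by ascending count and print
# "column email", filling columns from both edges towards the centre
place_committers()
{
    local ncommitters=$1
    awk -v n="$ncommitters" '
    BEGIN { l = 0; r = n }
    { print NR % 2 ? l++ : --r, $2 }'
}

# Hypothetical sample with five committers
printf '%s\n' '1 a@x' '2 b@x' '5 c@x' '8 d@x' '13 e@x' | place_committers 5
```

With five committers the columns are assigned in the order 0, 4, 1, 3, 2, so the committer with the most commits lands in the middle column.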

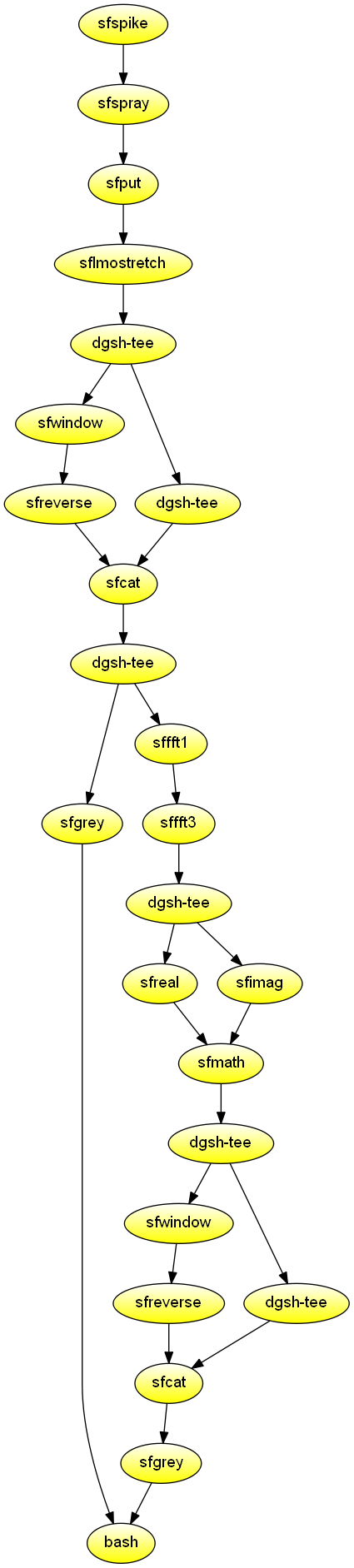

Waves: 2D Fourier transforms

Create two graphs: 1) a broadened pulse and the real part of its 2D Fourier transform, and 2) a simulated air wave and the amplitude of its 2D Fourier transform. Demonstrates using the tools of the Madagascar shared research environment for computational data analysis in geophysics and related fields. Also demonstrates the use of two scatter blocks in the same script, and the use of named streams.

#!/usr/bin/env dgsh

mkdir -p Fig

# The SConstruct SideBySideIso "Result" method

side_by_side_iso()

{

vppen size=r vpstyle=n gridnum=2,1 /dev/stdin $*

}

export -f side_by_side_iso

# A broadened pulse and the real part of its 2D Fourier transform

sfspike n1=64 n2=64 d1=1 d2=1 nsp=2 k1=16,17 k2=5,5 mag=16,16 \

label1='time' label2='space' unit1= unit2= |

sfsmooth rect2=2 |

sfsmooth rect2=2 |

tee |

{{

sfgrey pclip=100 wanttitle=n &

#dgsh-writeval -s pulse.vpl &

sffft1 |

sffft3 axis=2 pad=1 |

sfreal |

tee |

{{

sfwindow f1=1 |

sfreverse which=3 &

cat &

#dgsh-tee -I |

#dgsh-writeval -s ft2d &

}} |

sfcat axis=1 "<|" | # dgsh-readval

sfgrey pclip=100 wanttitle=n \

label1="1/time" label2="1/space" &

#dgsh-writeval -s ft2d.vpl &

}} |

call 'side_by_side_iso "<|" \

yscale=1.25 >Fig/ft2dofpulse.vpl' &

# A simulated air wave and the amplitude of its 2D Fourier transform

sfspike n1=64 d1=1 o1=32 nsp=4 k1=1,2,3,4 mag=1,3,3,1 \

label1='time' unit1= |

sfspray n=32 d=1 o=0 |

sfput label2=space |

sflmostretch delay=0 v0=-1 |

tee |

{{

sfwindow f2=1 |

sfreverse which=2 &

cat &

#dgsh-tee -I | dgsh-writeval -s air &

}} |

sfcat axis=2 "<|" |

tee |

{{

sfgrey pclip=100 wanttitle=n &

#| dgsh-writeval -s airtx.vpl &

sffft1 |

sffft3 sign=1 |

tee |

{{

sfreal &

#| dgsh-writeval -s airftr &

sfimag &

#| dgsh-writeval -s airfti &

}} |

sfmath nostdin=y re=/dev/stdin im="<|" output="sqrt(re*re+im*im)" |

tee |

{{

sfwindow f1=1 |

sfreverse which=3 &

cat &

#dgsh-tee -I | dgsh-writeval -s airft1 &

}} |

sfcat axis=1 "<|" |

sfgrey pclip=100 wanttitle=n label1="1/time" \

label2="1/space" &

#| dgsh-writeval -s airfk.vpl

}} |

call 'side_by_side_iso "<|" \

yscale=1.25 >Fig/airwave.vpl' &

#call 'side_by_side_iso airtx.vpl airfk.vpl \

wait

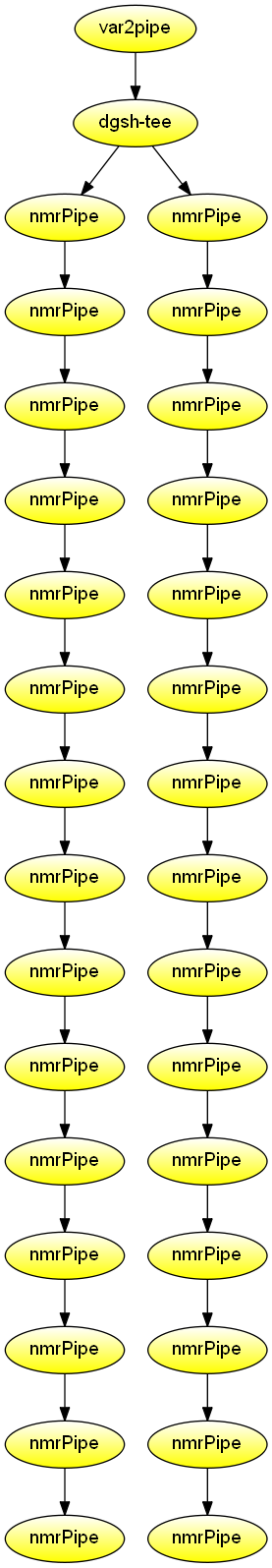

Nuclear magnetic resonance processing

Nuclear magnetic resonance in-phase/anti-phase channel conversion and processing in heteronuclear single quantum coherence spectroscopy. Demonstrates processing of NMR data using the NMRPipe family of programs.

#!/usr/bin/env dgsh

# The conversion is configured for the following file:

# http://www.bmrb.wisc.edu/ftp/pub/bmrb/timedomain/bmr6443/timedomain_data/c13-hsqc/june11-se-6426-CA.fid/fid

var2pipe -in $1 \

-xN 1280 -yN 256 \

-xT 640 -yT 128 \

-xMODE Complex -yMODE Complex \

-xSW 8000 -ySW 6000 \

-xOBS 599.4489584 -yOBS 60.7485301 \

-xCAR 4.73 -yCAR 118.000 \

-xLAB 1H -yLAB 15N \

-ndim 2 -aq2D States \

-verb |

tee |

{{

# IP/AP channel conversion

# See http://tech.groups.yahoo.com/group/nmrpipe/message/389

nmrPipe |

nmrPipe -fn SOL |

nmrPipe -fn SP -off 0.5 -end 0.98 -pow 2 -c 0.5 |

nmrPipe -fn ZF -auto |

nmrPipe -fn FT |

nmrPipe -fn PS -p0 177 -p1 0.0 -di |

nmrPipe -fn EXT -left -sw -verb |

nmrPipe -fn TP |

nmrPipe -fn COADD -cList 1 0 -time |

nmrPipe -fn SP -off 0.5 -end 0.98 -pow 1 -c 0.5 |

nmrPipe -fn ZF -auto |

nmrPipe -fn FT |

nmrPipe -fn PS -p0 0 -p1 0 -di |

nmrPipe -fn TP |

nmrPipe -fn POLY -auto -verb >A &

nmrPipe |

nmrPipe -fn SOL |

nmrPipe -fn SP -off 0.5 -end 0.98 -pow 2 -c 0.5 |

nmrPipe -fn ZF -auto |

nmrPipe -fn FT |

nmrPipe -fn PS -p0 177 -p1 0.0 -di |

nmrPipe -fn EXT -left -sw -verb |

nmrPipe -fn TP |

nmrPipe -fn COADD -cList 0 1 -time |

nmrPipe -fn SP -off 0.5 -end 0.98 -pow 1 -c 0.5 |

nmrPipe -fn ZF -auto |

nmrPipe -fn FT |

nmrPipe -fn PS -p0 -90 -p1 0 -di |

nmrPipe -fn TP |

nmrPipe -fn POLY -auto -verb >B &

}}

# We use temporary files rather than streams, because

# addNMR mmaps its input files. The diagram displayed in the

# example shows the notional data flow.

addNMR -in1 A -in2 B -out A+B.dgsh.ft2 -c1 1.0 -c2 1.25 -add

addNMR -in1 A -in2 B -out A-B.dgsh.ft2 -c1 1.0 -c2 1.25 -sub

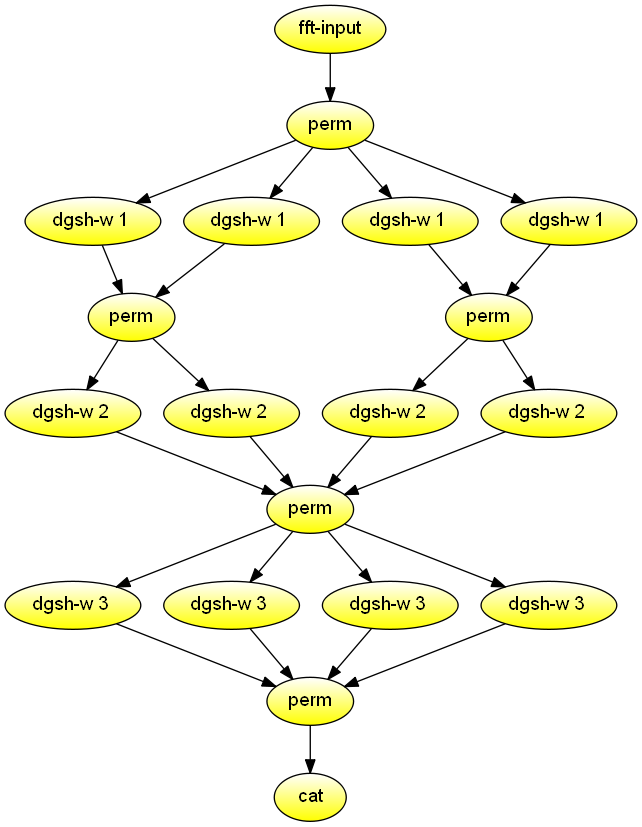

FFT calculation

Calculate the iterative FFT for n = 8 in parallel. Demonstrates combined use of permute and multipipe blocks.

#!/usr/bin/env dgsh

fft-input $1 |

perm 1,5,3,7,2,6,4,8 |

{{

{{

w 1 0 &

w 1 0 &

}} |

perm 1,3,2,4 |

{{

w 2 0 &

w 2 1 &

}} &

{{

w 1 0 &

w 1 0 &

}} |

perm 1,3,2,4 |

{{

w 2 0 &

w 2 1 &

}} &

}} |

perm 1,5,3,7,2,6,4,8 |

{{

w 3 0 &

w 3 1 &

w 3 2 &

w 3 3 &

}} |

perm 1,5,2,6,3,7,4,8 |

cat

Manage results

Combine, update, aggregate, summarise results files, such as logs. Demonstrates combined use of tools adapted for use with dgsh: sort, comm, paste, join, and diff.

#!/usr/bin/env dgsh

PSDIR=$1

cp $PSDIR/results $PSDIR/res

# Sort result files

{{

sort $PSDIR/f4s &

sort $PSDIR/f5s &

}} |

# Remove noise

comm |

{{

# Paste to master results file

paste $PSDIR/res > results &

# Join with selected records

join $PSDIR/top > top_results &

# Diff from previous results file

diff $PSDIR/last > diff_last &

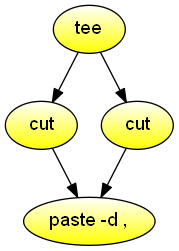

}}Reorder columns

Reorder columns in a CSV document. Demonstrates the combined use of tee, cut, and paste.
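Without dgsh, the same reordering needs the input to be read twice, for instance from a temporary file. A minimal plain-bash sketch, with a hypothetical function name and a made-up CSV line:

```shell
#!/usr/bin/env bash
# Move CSV columns 5-6 in front of columns 2-4
reorder_columns()
{
    local tmp
    tmp=$(mktemp) || return 1
    cat >"$tmp"
    paste -d , <(cut -d , -f 5-6 "$tmp") <(cut -d , -f 2-4 "$tmp")
    rm -f "$tmp"
}

# The first column is dropped, as in the dgsh script
echo 'a,b,c,d,e,f' | reorder_columns
```

The dgsh script obtains the two copies of the input from tee instead: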

#!/usr/bin/env dgsh

tee |

{{

cut -d , -f 5-6 - &

cut -d , -f 2-4 - &

}} |

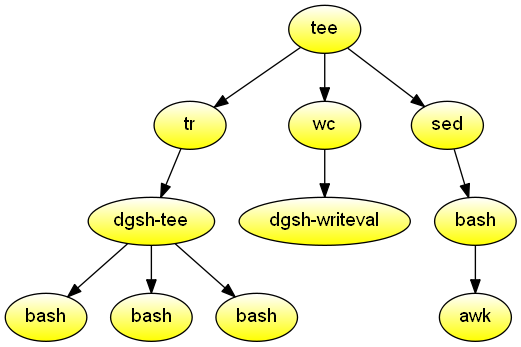

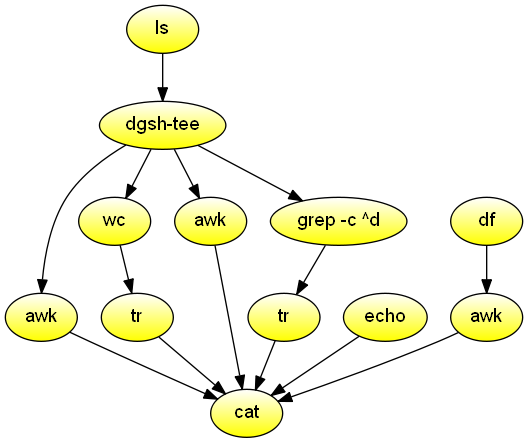

paste -d ,Directory listing

Windows-like DIR command for the current directory.

Nothing that couldn't be done with ls -l | awk.

Demonstrates combined use of stores and streams.

#!/usr/bin/env dgsh

FREE=`df -h . | awk '!/Use%/{print $4}'`

ls -n |

tee |

{{

# Reorder fields in DIR-like way

awk '!/^total/ {print $6, $7, $8, $1, sprintf("%8d", $5), $9}' &

# Count number of files

wc -l | tr -d \\n &

# Print label for number of files

echo -n ' File(s) ' &

# Tally number of bytes

awk '{s += $5} END {printf("%d bytes\n", s)}' &

# Count number of directories

grep -c '^d' | tr -d \\n &

# Print label for number of dirs and calculate free bytes

echo " Dir(s) $FREE bytes free" &

}} |

cat